

Solving Network Problems with MCS: Restricted Connection Capacity by a Policy-Based Event

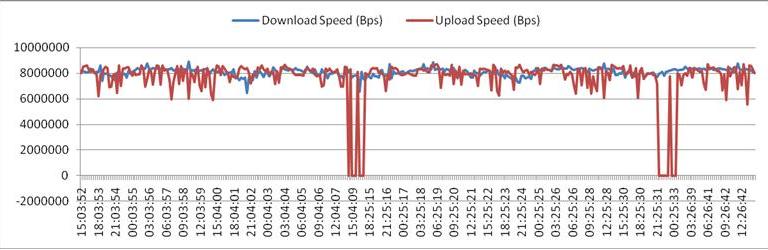

Network assessment testing for this analysis was performed with MyConnection Server and the Access Series Quality Assessment Point appliance. Connection tests were run every 15 minutes over several days to capture network capacity and performance over time, during both peak and low-activity periods. The connection had consistent capacity, as shown by the steady download speeds below; the two outages in the upload speeds (in red) are likely due to an outside anomaly.

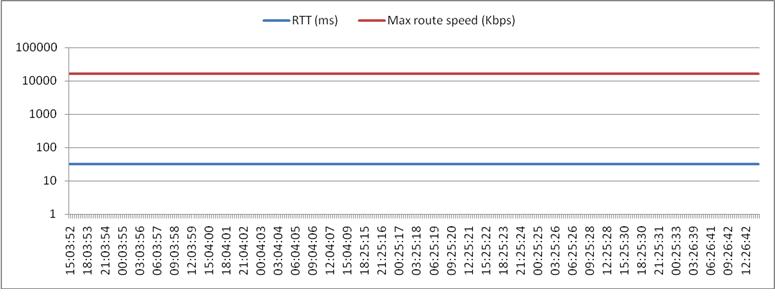

The trip time is a constant 32ms, which should sustain a 16Mbps rate; however, the throughput is exactly half that, at 8Mbps (as shown in Fig. 1 above). The max route speed (Fig. 2) indicates the maximum speed that should be attainable if latency were the only limiting factor; in this case, it is 16Mbps. The pattern is similar for both the upload and the download speeds.
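The link between trip time and attainable speed can be illustrated with the standard bound for a window-limited TCP flow: throughput cannot exceed the window size divided by the round-trip time, so doubling the effective delay halves the achievable rate. The sketch below assumes a 64 KiB (65,535-byte) window, the largest TCP window without the window-scale option; the article does not state the window size, MCS's own max-route-speed calculation may differ, and the function name `max_throughput_mbps` is hypothetical.

```python
# Sketch: window-limited TCP throughput is bounded by window size / round-trip time.
# ASSUMPTION: a 65,535-byte window (largest TCP window without window scaling);
# the article does not state the actual window size used in the MCS tests.

WINDOW_BYTES = 65_535  # hypothetical window size, not taken from the MCS test


def max_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on throughput (Mbps) for a window-limited TCP flow."""
    if rtt_ms <= 0:
        raise ValueError("round-trip time must be positive")
    bits = window_bytes * 8          # window size in bits
    seconds = rtt_ms / 1000.0        # round-trip time in seconds
    return bits / seconds / 1_000_000


if __name__ == "__main__":
    # 32 ms trip time from the test: lands close to the 16 Mbps max route speed.
    print(f"{max_throughput_mbps(WINDOW_BYTES, 32):.1f} Mbps")
    # Doubling the effective delay halves the bound (about 8 Mbps).
    print(f"{max_throughput_mbps(WINDOW_BYTES, 64):.1f} Mbps")
```

Under this assumption the 32ms trip yields about 16.4 Mbps, and any extra delay added per window (for example, by recovering from lost data) lowers the bound proportionally.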

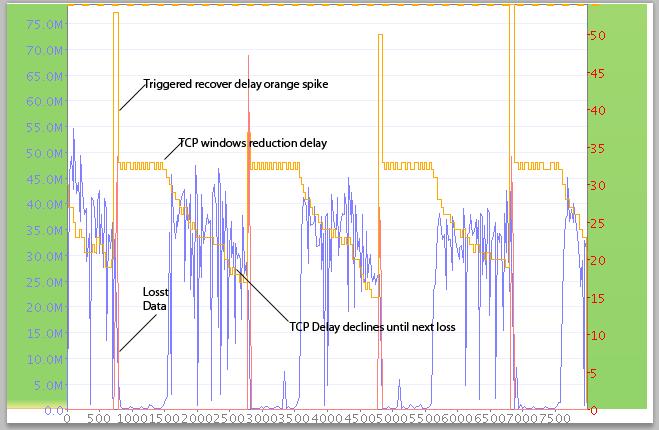

Further investigation finds that every test shows the same pattern, clearly visible in the graph below. The download speed (in blue) is pushing 45Mbps, most probably a DS3 pipe, but then hits data loss (in red), resulting in a 50ms inherent delay. TCP reduces the window payload to compensate for the delay; in this case, it compensates from 16ms to 33ms (in brown), and then the data loss repeats. The data loss occurs precisely every second, which indicates that it is being triggered by some policy-based event. Such a constant pattern indicates that the problem occurs by design; reduced capacity due to network congestion (and thus not by design) would show a far more erratic pattern. The reduction in download speed is not part of the policy-based event itself but a consequence of the data lost to it.
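The difference between clockwork loss and congestion-driven loss can be checked numerically: take the intervals between loss events and compute their coefficient of variation (standard deviation divided by mean). A value near zero means the loss is almost perfectly periodic, while congestion tends to produce widely varying gaps. This is a minimal sketch; the function names `interval_regularity` and `looks_policy_driven`, the 0.1 threshold, and the sample timestamps are all hypothetical and are not MCS output.

```python
import statistics
from typing import Sequence, Tuple


def interval_regularity(loss_times_s: Sequence[float]) -> Tuple[float, float]:
    """Return (mean interval, coefficient of variation) between loss events.

    Timestamps are assumed to be in seconds and sorted in ascending order.
    """
    if len(loss_times_s) < 3:
        raise ValueError("need at least three loss events to judge regularity")
    # Gaps between consecutive loss events.
    intervals = [b - a for a, b in zip(loss_times_s, loss_times_s[1:])]
    mean = statistics.mean(intervals)
    if mean <= 0:
        raise ValueError("timestamps must be strictly increasing on average")
    cv = statistics.pstdev(intervals) / mean
    return mean, cv


def looks_policy_driven(loss_times_s: Sequence[float], max_cv: float = 0.1) -> bool:
    # HYPOTHETICAL threshold: gaps varying by less than ~10% of their mean
    # are treated as periodic (policy-like) rather than congestion-driven.
    _, cv = interval_regularity(loss_times_s)
    return cv < max_cv


if __name__ == "__main__":
    periodic = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]  # synthetic: loss once per second
    erratic = [0.0, 0.3, 1.9, 2.2, 4.8, 5.1]   # synthetic: irregular gaps
    print(looks_policy_driven(periodic))  # True
    print(looks_policy_driven(erratic))   # False
```

The threshold is a judgment call; in practice it would be tuned against loss timestamps from known-good and known-congested links.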

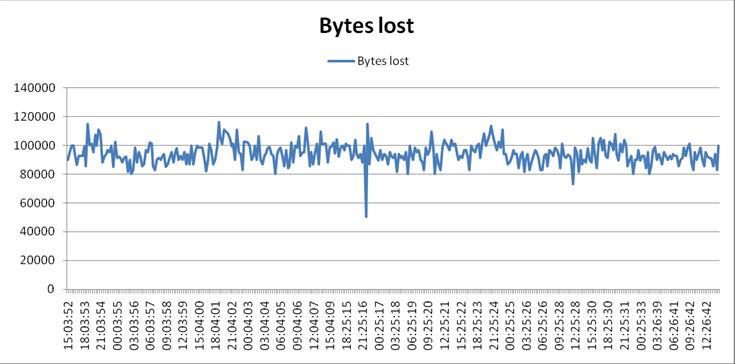

The loss is so regular that the speed graph (Fig. 1) and the lost-data graph (Fig. 4) are a close match. The added delay accounts for the throughput lost on the 32ms trip.
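One way to put a number on how closely two per-test series track each other is the Pearson correlation coefficient: a magnitude near 1 means the series move in lockstep (positive) or as mirror images (negative), while a value near 0 means no linear relationship. This is a minimal sketch; the article does not say how the two figures were compared, and the sample series below are hypothetical, not MCS data.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance numerator and per-series spread (unnormalized).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sy = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


if __name__ == "__main__":
    # HYPOTHETICAL per-test samples: lost data rises exactly as speed falls.
    speed_mbps = [8.0, 7.5, 8.5, 6.0, 8.0]
    lost_data = [4.0, 5.0, 3.0, 8.0, 4.0]
    print(f"r = {pearson_r(speed_mbps, lost_data):.2f}")  # r = -1.00
```

Real measurements would not be perfectly linear, so a magnitude well above 0.9 would already indicate that the two graphs are driven by the same underlying event.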

The bottom line is that the trip latency should dictate a window delay of 16-20ms, but the data loss pushes this to 32-50ms, causing the drop in throughput. The drop occurs at regular 2-second intervals; since every test shows identical results, this is clearly a network design policy somewhere. Next steps would include a review of the policy settings on the relevant devices (routers, switches, etc.).
